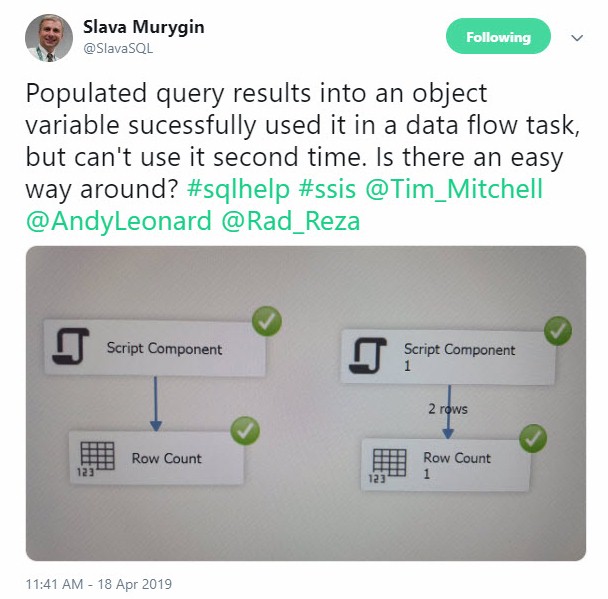

My friend Slava Murygin (@SlavaSQL) recently asked a question on Twitter:

Populated query results into an object variable, successfully used it in a data flow task, but can’t use it a second time. Is there an easy way around?

Tim Mitchell (Blog | @Tim_Mitchell | Tim’s post: Temp Tables in SSIS) and I weighed in. You can read the thread here. Spoiler: Tim and I agree that staging data temporarily in a work table is a good solution.

“Good Solution?”

Like all SSIS solutions (and software design solutions, and life solutions), staging data temporarily in a work table is not the only solution. Why do Tim and I agree on work tables? My best answer: work tables reduce the total cost of ownership.

What are the Other Solutions?

There are several alternatives. You could stage data temporarily in a Recordset Destination. There’s a way to make SSIS work with tempdb. You can stage to a Raw File. You can use an SSIS Cache (though I believe this remains an Enterprise-only feature). There are other solutions as well.

“Why do You Advocate for Work Tables, Andy?”

I’m glad you asked. Work tables are:

- Simple

- Fast

- Understood by almost every SSIS developer, analyst, and DBA

Simple

A work table is a table defined in a nearby data location: either in a schema in the source or target database, or in a database on the same instance. I let constraints drive where I place a work table. Closer (a schema in the same database) is often better for performance.

I write this knowing some folks will frown at the suggestion of polluting a source or target database with additional schemas and tables. Best practices exist for a reason. It’s helpful to maintain a list of best practices and to record, alongside each practice, the reason it exists. Knowing the reasons makes it easier to recognize when violating one or more best practices is justified, and this could be such a case.

In some cases – like when interacting with databases for third-party solutions – adding schemas and tables is a bad idea (or a violation of an EULA). In those cases, stand up a work database on the same instance and place the work table there, unless…

Some data integration design patterns require joining the work table to a source or target table, and some relational database engines do not support three-part naming (database.schema.table) in SQL queries, so a work table in a separate database cannot simply be joined in the same query. My suggestion in those cases is to be creative.

In my humble opinion, “we’ve always / never done it that way” is a warm and open invitation to explore why it’s always / never been done that way.

Fast

A work table should be used by the data integration process while that process executes. Beyond that, it should be queried only occasionally, and only by development or support personnel. I refer to this state as owned, and say things like, “WorkTable1 is owned by the data integration process.” Note: ownership has security implications, even in Production.

Since the data integration process owns the work table, developers should be able to populate it using an OLE DB Destination configured for fast load (if supported by the provider), which makes staging temporary data very fast. The data integration process should also be able to truncate and manipulate data in a work table as the load pattern requires.
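To make the reuse point concrete, here is a minimal sketch in Python using the standard-library `sqlite3` module as a stand-in for SQL Server and the OLE DB Destination. It is not SSIS code. The work table name `WorkTable1` comes from the text above, but its columns and the helper functions `stage_rows`, `total_amount`, and `large_customers` are hypothetical. SQLite has no `TRUNCATE TABLE`, so an unqualified `DELETE` plays that role, and `executemany` is only a rough analogue of a bulk "fast load." The pattern is: clear the table, load it once, then read the staged rows as many times as you need, which is the reuse the object variable in Slava’s question did not give him.

```python
import sqlite3

def stage_rows(conn, rows):
    """Truncate-and-load a hypothetical work table."""
    with conn:  # commits on success, rolls back on error
        conn.execute(
            "CREATE TABLE IF NOT EXISTS WorkTable1 ("
            "CustomerID INTEGER PRIMARY KEY, Amount REAL NOT NULL)"
        )
        # SQLite has no TRUNCATE TABLE; an unqualified DELETE empties the table.
        conn.execute("DELETE FROM WorkTable1")
        # executemany stands in (loosely) for a bulk "fast load".
        conn.executemany(
            "INSERT INTO WorkTable1 (CustomerID, Amount) VALUES (?, ?)", rows
        )

def total_amount(conn):
    # First consumer of the staged rows: an aggregate.
    return conn.execute("SELECT SUM(Amount) FROM WorkTable1").fetchone()[0]

def large_customers(conn, threshold):
    # Second consumer: reads the same staged rows again.
    cur = conn.execute(
        "SELECT CustomerID FROM WorkTable1 WHERE Amount >= ? ORDER BY CustomerID",
        (threshold,),
    )
    return [row[0] for row in cur]

if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    stage_rows(conn, [(1, 10.0), (2, 250.0), (3, 75.5)])
    print(total_amount(conn))         # 335.5
    print(large_customers(conn, 50))  # [2, 3]
    # Re-running the load replaces, rather than appends to, the staged data.
    stage_rows(conn, [(4, 5.0)])
    print(total_amount(conn))         # 5.0
```

Because each load starts by emptying the table, re-running the process does not accumulate stale rows, while every downstream step in the same run can query the staged data independently.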

Easily Understood

Data loaded to a work table may persist between package executions. If an execution fails, development and operations personnel may query the table to see the data that was persisted – and the state in which it was persisted – during the previous execution.

Data in a work table is accessible using SQL. Not everyone understands SSIS. Almost everyone working around data understands SQL syntax.
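The troubleshooting benefit can be sketched the same way. The Python below again uses the standard-library `sqlite3` module, this time with a file-backed database, as a stand-in for a work table on a real instance. The `run_package` function, its `fail_after` parameter, and the `Status` column are hypothetical and exist only to simulate an execution that stages rows, updates some of them, and then fails. After the failure, a separate connection uses plain SQL to see which rows were persisted and in what state.

```python
import os
import sqlite3
import tempfile

def run_package(db_path, ids, fail_after=None):
    """Hypothetical 'package execution' that stages rows, then may fail partway."""
    conn = sqlite3.connect(db_path)
    try:
        with conn:
            conn.execute(
                "CREATE TABLE IF NOT EXISTS WorkTable1 (ID INTEGER, Status TEXT)"
            )
            conn.execute("DELETE FROM WorkTable1")  # truncate at the start of each run
            conn.executemany(
                "INSERT INTO WorkTable1 (ID, Status) VALUES (?, 'staged')",
                [(i,) for i in ids],
            )
        # A later step marks rows as loaded, committing each update separately.
        for n, row_id in enumerate(ids):
            if fail_after is not None and n >= fail_after:
                raise RuntimeError(f"simulated failure at ID {row_id}")
            with conn:
                conn.execute(
                    "UPDATE WorkTable1 SET Status = 'loaded' WHERE ID = ?", (row_id,)
                )
    finally:
        conn.close()

def inspect(db_path):
    """What support personnel might run after a failure: ordinary SQL."""
    conn = sqlite3.connect(db_path)
    try:
        return conn.execute("SELECT ID, Status FROM WorkTable1 ORDER BY ID").fetchall()
    finally:
        conn.close()

if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        path = os.path.join(d, "work.db")
        try:
            run_package(path, [1, 2, 3], fail_after=2)
        except RuntimeError as exc:
            print(exc)           # simulated failure at ID 3
        print(inspect(path))     # [(1, 'loaded'), (2, 'loaded'), (3, 'staged')]
```

Nothing in `inspect` depends on the integration tool; anyone who can write a `SELECT` can see where the previous execution stopped.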

One thought on “Staging Data Temporarily in an SSIS Data Flow”