I recently read a social media post from a data engineer that I found both encouraging and heartbreaking. This data engineer shared that he used an AI engine to successfully convert an SSIS package, roughly 1MB in size, into a different data engineering platform. Why encouraging? First, it is genuinely encouraging that AI engines are …

Continue reading Separating Concerns in SSIS

Category: SSIS Design Patterns

Laid Off? Or the Beginning of Your Next Opportunity?

Updates: The “opp23” limited-time offer has expired. I may make similar offers in the future. The best way to learn of offers from me is to subscribe to my mailing list and check the checkbox labeled “I would like to receive more information about products, services, events, communications, and offers.” If you signed up for …

Video-Follow-up on SSIS Framework Restartability

A couple of days ago I delivered a short demonstration of SSIS Framework application restartability. I saw one thing I did not like – a bug – and I mentioned another bug I had witnessed. Yesterday, Kent Bradshaw and I had another opportunity to work on the SSIS Framework code, so we fixed the glitches. Enjoy this …

Video-Use the SSIS Framework to Restart ETL

I did a video earlier today where I shared SSIS Framework’s Application restart functionality. Enjoy!

Video-Starting a Demo SSIS Project

I started building a new demo SSIS project and thought, “I wonder if anyone might be interested in learning more about what I’m doing here?” I am not sure. I went live and recorded the session, just in case. Enjoy! :{>

Install and Configure SSIS Framework File Community Edition

I recently released SSIS Framework File Community Edition. In this video, I walk through obtaining the code and deploying the components of this free and open-source solution. You can obtain the code at github.com/aleonard763/SSIS-Framework-File-Community-Edition. Note: I tried some new settings in OBS Studio. Let me know what you think! Enjoy! :{>

Join Me for LIVE Online SSIS and ADF Training 29 Nov – 1 Dec

It’s been a while since I delivered training live and online. Let’s fix that! Join me for Master the Fundamentals of ADF and Expert SSIS 29 Nov – 1 Dec.

Intelligent Data Integration Using Hashed Incremental Loads

Coming soon to Premium Level: Intelligent Data Integration Using Hashed Incremental Loads. Also coming soon: A price hike. Enjoy this 2:20 preview and then visit the Premium Level page to sign up (before the price goes up). :{>

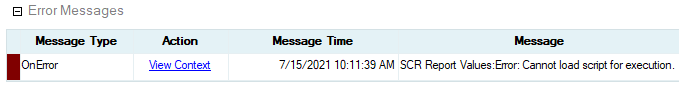

SSIS Script Error: Cannot Load Script for Execution

If I had a nickel for every time I’ve encountered “Cannot load script for execution,” I’d have a lot of nickels. In this short (7:24) video, I demonstrate the issue and my fix. My Fix: For me, the fix is almost always setting the SSIS project’s TargetServerVersion property to the proper version. “Andy, How Did …

Join me for a SQLBits Replay of Faster SSIS 15 Jul!

I am honored to join viewers for a SQLBits Replay of my session: “Faster SSIS” 15 Jul 2021 at 8:00 PM BST (3:00 PM EDT)! Visit SQLBits for more information! :{>