First, a couple shoutouts to my brothers and friends, Joel Cochran (@joelcochran) and Shannon Lowder (@shannonlowder). This approach grew out of conversations with them.

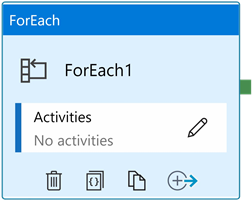

The ForEach activity iterates over a collection of items. One less-documented property of the ForEach activity is that it (currently) iterates the entire collection. I can hear some of you asking…

“What if something fails inside the ForEach activity’s inner activities, Andy?”

That is an excellent question! I’m glad you asked. The answer is: The ForEach activity continues iterating.

I can now hear some of you asking…

“What if I want the ForEach activity to fail when an inner activity fails, Andy?”

Another excellent question, and you’ve found a post discussing one way to “break out” of a ForEach activity’s iteration.
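The default behavior described above can be modeled in a few lines of Python. This is only a sketch of the semantics as this post describes them, not ADF code; the function names and the sample values are hypothetical.

```python
def run_inner_activities(item):
    """Hypothetical stand-in for a ForEach activity's inner activities."""
    if item == 2:
        raise RuntimeError(f"inner activity failed for item {item}")
    return f"processed {item}"


def for_each(items):
    """Visit every item, recording failures instead of stopping."""
    succeeded, failed = [], []
    for item in items:
        try:
            run_inner_activities(item)
            succeeded.append(item)
        except RuntimeError:
            # The loop does not stop here; it moves on to the next item.
            failed.append(item)
    return succeeded, failed


succeeded, failed = for_each([0, 1, 2, 3])
print(succeeded, failed)
```

The item after the failure is still processed, which is the behavior the rest of this post works around.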

One caveat regarding the solution that follows: this solution halts the execution of the pipeline. If you wish to have the pipeline

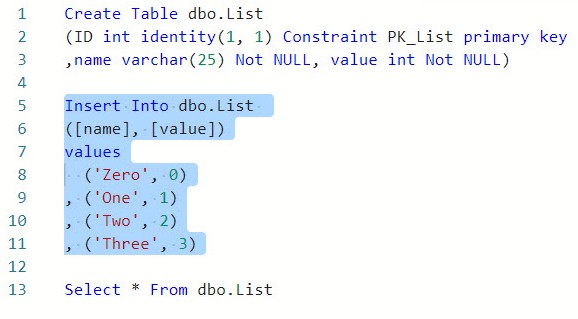

First, a List

My list is a collection of name-value pairs retrieved from an Azure SQL database table named dbo.List:

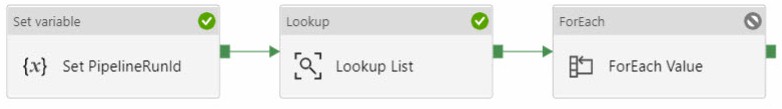

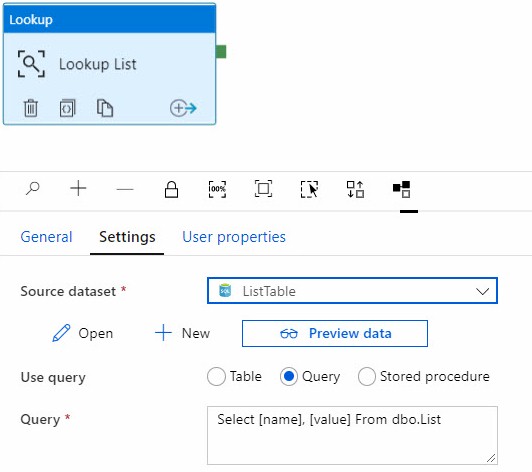

Next, I create a new ADF pipeline and add a Lookup Activity to the pipeline canvas. I named my Lookup activity “Lookup List” and configured the settings to return the name-value pairs in the dbo.List table:

After this, I add a ForEach activity to the pipeline and connect a Success output from the “Lookup List” lookup activity to the new ForEach activity. I rename the ForEach activity “ForEach Value” and configure the following settings on the Settings tab:

- Sequential: Checked

- Items:

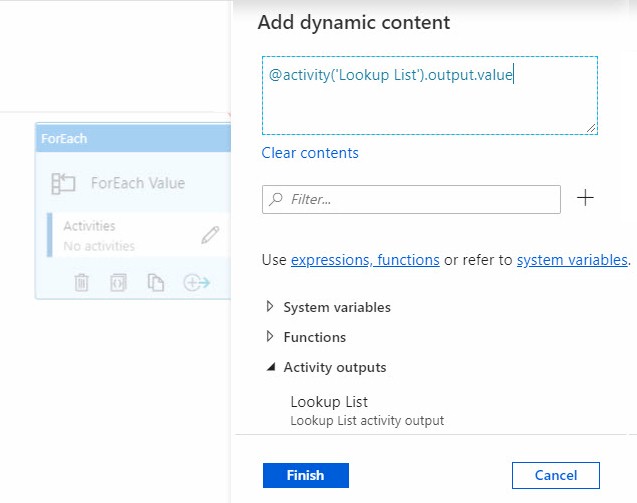

- Click the “Add dynamic content [Alt + P]” link

- When the Add dynamic content blade displays, select Activity outputs > Lookup List

- The expression displays “@activity(‘Lookup List’).output”

- Append “.value” to the expression so that the expression reads “@activity(‘Lookup List’).output.value”

Click the Finish button.
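To see why “.value” is appended, here is a Python sketch of the shape I understand a Lookup activity to return when it is not limited to the first row: an object holding a row count and an array of rows. The column names (name, value) and the row contents are assumptions for illustration, since the dbo.List rows appear only in a screenshot.

```python
# Assumed shape of the "Lookup List" activity output (multiple rows returned).
# Column names and row values are hypothetical.
lookup_output = {
    "count": 4,
    "value": [
        {"name": "zero", "value": 0},
        {"name": "one", "value": 1},
        {"name": "two", "value": 2},
        {"name": "three", "value": 3},
    ],
}

# Equivalent of @activity('Lookup List').output.value: the array of rows,
# which is what the ForEach activity's Items property needs.
items = lookup_output["value"]

for item in items:
    # Inside the loop, item().value in ADF corresponds to item["value"] here.
    print(item["name"], item["value"])
```

Without “.value”, the expression refers to the whole output object rather than the array of rows.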

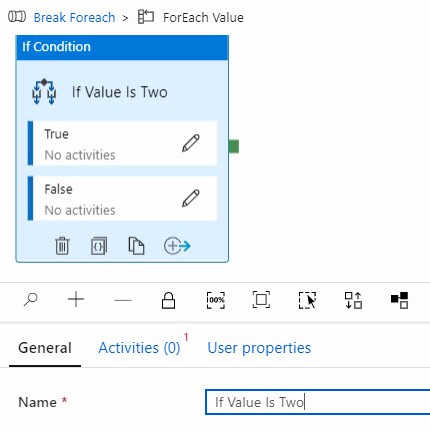

Click the “ForEach Value” activity’s Activities edit icon (pencil) to open the ForEach activity’s inner activities canvas. Drag an If Condition onto the canvas and rename it “If Value Is Two”:

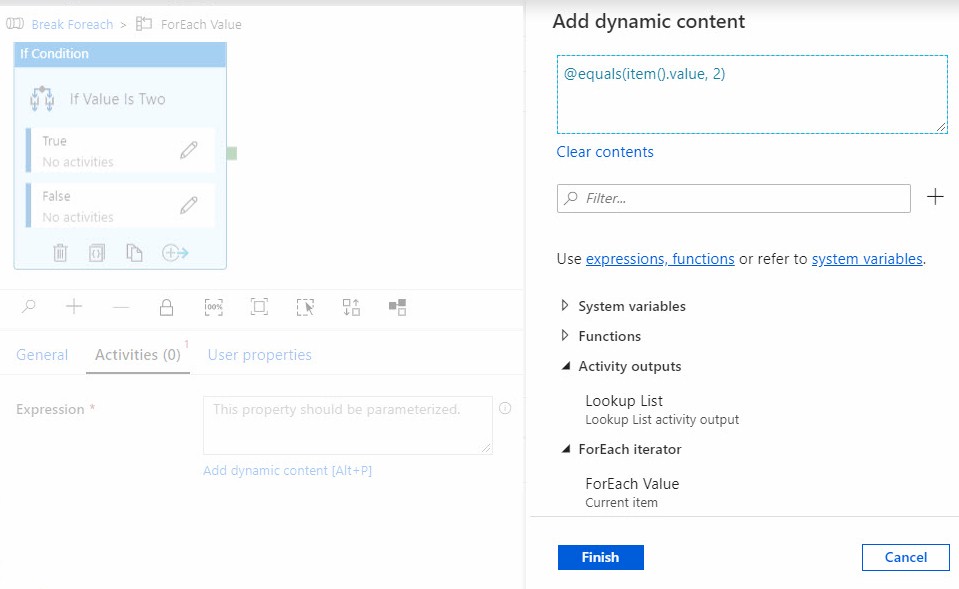

On the “If Value Is Two” If condition’s Activities tab, configure the Expression property’s dynamic content, setting it to @equals(item().value, 2):

Click the Finish button.

Click the “Break ForEach” pipeline name in the breadcrumbs:

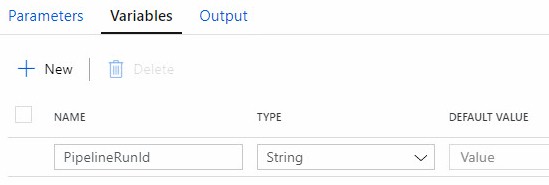

Click the pipeline Variables tab and add a new String variable named PipelineRunId:

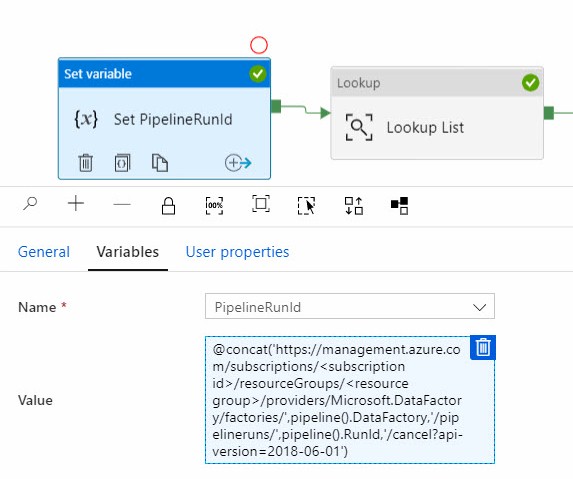

Drag a Set variable activity onto the pipeline canvas and rename it “Set PipelineRunId”. On the Variables tab, select the PipelineRunId variable from the Name dropdown. Click the “Add dynamic content [Alt + P]” link beneath the Value property and enter an expression similar to:

@concat('https://management.azure.com/subscriptions/<subscription id>/resourceGroups/<resource group>/providers/Microsoft.DataFactory/factories/',pipeline().DataFactory,'/pipelineruns/',pipeline().RunId,'/cancel?api-version=2018-06-01')

A Couple / Three Notes…

Note 1: The expression listed above builds a call to the Azure Data Factory REST API, specifically to the Cancel method for Pipeline Runs. You must supply the portions shown in angle brackets for:

- Your subscription Id

- Your Resource Group name

The expression will fill in the blanks for your data factory name and the RunId value for the pipeline’s current execution.
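For readers who find the concat expression hard to scan, the same string assembly can be sketched in Python. The function name and the sample identifiers below are hypothetical; only the URL layout and the api-version come from the expression above.

```python
def build_cancel_url(subscription_id, resource_group, factory_name, run_id,
                     api_version="2018-06-01"):
    """Assemble the Pipeline Runs cancel URL the same way the concat expression does."""
    return (
        "https://management.azure.com"
        f"/subscriptions/{subscription_id}"
        f"/resourceGroups/{resource_group}"
        "/providers/Microsoft.DataFactory"
        f"/factories/{factory_name}"      # pipeline().DataFactory in ADF
        f"/pipelineruns/{run_id}"         # pipeline().RunId in ADF
        f"/cancel?api-version={api_version}"
    )


# Hypothetical values, for illustration only.
url = build_cancel_url(
    subscription_id="00000000-0000-0000-0000-000000000000",
    resource_group="my-rg",
    factory_name="my-adf",
    run_id="abc123",
)
print(url)
```

Printing the assembled URL is a handy way to check for a missing slash or a misplaced segment before wiring the expression into the pipeline.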

Note 2: By default, Azure Data Factory is not permitted to execute ADF REST API methods. The ADF managed identity must first be added to the Contributor role. I describe the process of adding the ADF managed identity to the Contributor role in a post titled Configure Azure Data Factory Security for the ADF REST API.

Note 3: Pipelines running in Debug cannot be cancelled this way. A pipeline must be triggered (manual triggers work) for its run to be accessible to the REST API’s Pipeline Runs cancel method.

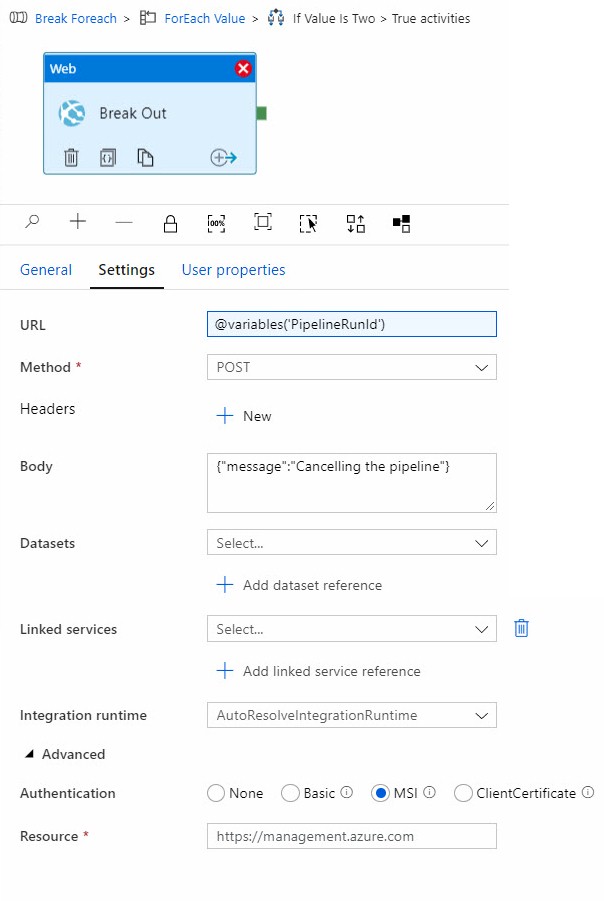

Navigate to the “ForEach Value” activities > “If Value Is Two” True activities. Add a Web activity to the canvas and rename the web activity “Break Out”. On the Settings tab, click inside the URL property, click the “Add dynamic content [Alt + P]” link, and then select Variables > PipelineRunId. Select “POST” for the Method property. The Body property displays; enter a message key-value in JSON (similar to {"message":"Cancelling the pipeline"}). Expand the Advanced section and select the MSI option. When the Resource property displays, enter “https://management.azure.com” in the property value textbox:
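As a rough picture of what the “Break Out” Web activity sends, here is a Python sketch that builds (but does not send) an equivalent HTTP request. The URL values and the token are placeholders; in ADF, the MSI option obtains the bearer token for the https://management.azure.com resource on your behalf, and that step is not reproduced here.

```python
import json
import urllib.request


def build_cancel_request(cancel_url, access_token):
    """Build a POST request resembling the one the Web activity issues."""
    body = json.dumps({"message": "Cancelling the pipeline"}).encode("utf-8")
    return urllib.request.Request(
        cancel_url,
        data=body,
        method="POST",
        headers={
            # Placeholder token; ADF's managed identity supplies the real one.
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
    )


# Hypothetical URL segments and token, for illustration only.
request = build_cancel_request(
    "https://management.azure.com/subscriptions/<subscription id>"
    "/resourceGroups/<resource group>/providers/Microsoft.DataFactory"
    "/factories/my-adf/pipelineruns/abc123/cancel?api-version=2018-06-01",
    "<access token>",
)
print(request.get_method(), request.full_url)
```

The request is only constructed here; passing it to urllib.request.urlopen would actually send it, which requires a valid token and a triggered pipeline run.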

Click the Publish All toolbar item if it is enabled (and includes a number-bubble).

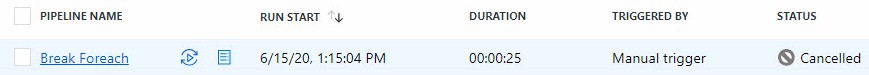

Click the Add Trigger > Trigger Now option to trigger the last-deployed-version of the pipeline. If all goes as planned, the pipeline execution should cancel itself. On the ADF Monitor page, a cancelled pipeline execution appears as shown here:

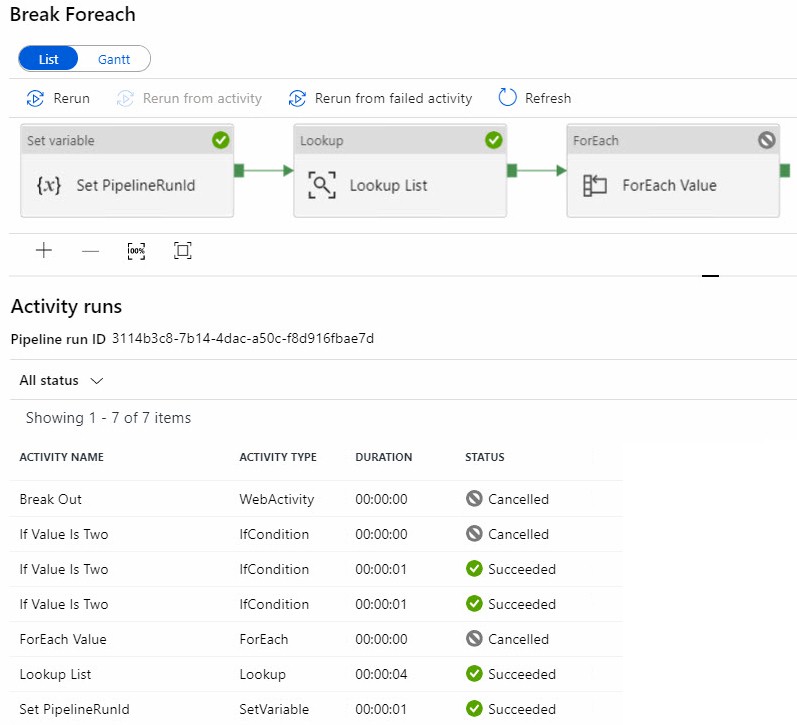

Clicking the “Break ForEach” link in the Pipeline Name column displays runtime details:

Note the “If Value Is Two” Conditional If activity executed three times – once each for the values 0, 1, and 2 – before cancelling the pipeline in the “Break Out” web activity. Note the ForEach activity stopped iterating, and the value 3 was never evaluated because the pipeline was cancelled when iteration reached the value 2.
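The run described above can be modeled with a short Python sketch. This is a simplification under stated assumptions: the exception is a hypothetical stand-in for the cancel request, whereas the real cancellation is a REST call that the ADF service acts on.

```python
class PipelineCancelled(Exception):
    """Hypothetical signal standing in for the pipeline run being cancelled."""


def break_out():
    # Stand-in for the "Break Out" Web activity posting to the cancel URL.
    raise PipelineCancelled()


def run_pipeline(values):
    """Model the sequential 'ForEach Value' loop with its inner If Condition."""
    evaluated = []
    try:
        for value in values:
            evaluated.append(value)   # "If Value Is Two" evaluates this item
            if value == 2:            # @equals(item().value, 2)
                break_out()
    except PipelineCancelled:
        return "Cancelled", evaluated
    return "Succeeded", evaluated


status, evaluated = run_pipeline([0, 1, 2, 3])
print(status, evaluated)
```

In this model the value 3 is never evaluated, matching the three If Condition executions seen on the Monitor page.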

Mission Accomplished

What did we do?

- We populated a pipeline variable (PipelineRunId) with a URL that invokes the cancel pipeline runs method of the ADF REST API.

- We loaded a dataset via a T-SQL query – executed inside a Lookup activity – against a table named dbo.List.

- We iterated the values returned to the Lookup activity.

- We used an If Condition activity to check for a value of 2.

- If we found the value 2, we executed the ADF REST API invocation of the cancel pipeline runs method contained in the pipeline variable named PipelineRunId.

Conclusion

I can hear some of you thinking, “But Andy, what if I want to perform other operations after deciding I want to break out of the ForEach activity but before the pipeline is cancelled?” That’s an excellent question. The answer is: “There’s a book and a class that cover that particular design pattern, but neither has been released (at the time of this writing…).”

<ShamelessPlug>

Need Help Getting Started with ADF?

Enterprise Data & Analytics specializes in training and helping enterprises modernize their data engineering by lifting and shifting SSIS from on-premises to the cloud. Our experienced engineers grok enterprises of all sizes. We’ve done the hard work for large and challenging data engineering enterprises. We’ve earned our blood-, sweat-, and tear-stained t-shirts. Reach out. We can help.

</ShamelessPlug>

Hi Andy,

I have been looking for months for an article like this one. Thank you so much for posting!

Regards,

Andrew

I keep getting the error "code":"ReferencedPipelineRunNotFound" when the Web API call is made to cancel, but when I check the URL that got constructed as part of PipelineRunId, the values are correct. I manually checked the RunId and it matches. Did you face such a problem?

Hi Naveen,

This happened to me when I executed the pipeline in Debug. For me, this only works when I trigger the pipeline execution manually or using a scheduled or event trigger. If the pipeline execution isn’t visible on the monitoring page, I do not believe the cancel command can find it.

Hope this helps,

Andy

Thanks for the reply. Let’s say I call a pipeline (PL1) from another pipeline (PL2). If PL1 is cancelled based on some condition, I see that PL2 is also getting cancelled. How can this be avoided?

That’s a good question, Naveen! I do not know the answer to it at present, but my first line of inquiry would be to test calling PL2 from the REST API. If that didn’t provide enough decoupling to allow PL2 to continue executing even though PL1 is cancelled, I’d inject a different method to execute PL2 – perhaps a fire-and-forget Azure function would provide enough decoupling? Just a thought.

Thank You Andy for your posting! This article solved the challenge for my use case. Thumbs up! =)

Is this Engineering?

Or yet another workaround of ADF limitations forcing accidental complexity in design?

What’s the effect on maintainability and onboarding of new developers when the data kiosk becomes a data warehouse full of these workarounds?

What about acknowledging these limitations as a negative side of the trade-off when choosing low-code?

For decades we have been doing:

    foreach (var value in values)
    {
        if (value == 2)
        {
            return;
        }
    }

Simple.

Some other limitations in ADF:

Not able to have

– more than 40 activities in a pipeline.

– nested if, or a loop inside an if.

– logical OR as a dependency constraint (even SSIS supported that one!)

– …

I’m going to buy your book, and recommend it, if it includes a well-balanced chapter on the tradeoffs of low-code, what kind of projects these tools are suited for, and when the limitations are not worth it. Happy to be pointed in the right direction if this chapter already exists!

Hi Andreas,

Thanks for reading this post and sharing your thoughts. I am uncertain this side-effect can be wholly (or even partially) attributed to low-code. To me, it seems to be missing functionality.

If I had the ability to build custom ADF activities, I could be more proactive. I could, perhaps, design a better Foreach activity. While I can design and share custom SSIS tasks by encapsulating functionality that can be done in an SSIS Script Task into a redistributable control, ADF currently does not support such encapsulation. I can, however, invoke APIs, call Azure Functions in ADF, and otherwise engage functionality outside of ADF proper to accomplish much flexibility; much like the first steps of defining custom functionality inside an SSIS Script Task.

To answer your opening question: “Yes. This is engineering.” I am making use of available technology to solve a problem.

To my knowledge, none of my books contain a “chapter on the tradeoffs of low-code, and what kind of projects these tools are suited for, and when the limitations are not worth it,” so you may better spend your money purchasing books by other authors.

It sounds as if the proper use for low-code is a topic about which you are passionate. I encourage you to share more on the topic (if you haven’t already). Please let me know and I will be honored to pass along links to my readers. If you are interested in writing a book on the topic, I would be honored to introduce you to my editor at Apress; he’s one of the best in the business.

In my books and blog posts and presentations, I attempt to share some problems I’ve overcome and how I overcame them. In this limited manner, I try to help. This does not mean that this is the only way to help, but it does mean that this is my way to help, or at least, attempt to help.

I encourage you (and everyone) to go and do likewise.

Thank you for spending your time and giving a good reply. Also, thanks for helping others with possible solutions.

Sure, this is missing functionality, and could be implemented in a low-code tool. But will these tools ever get to a point where simple stuff like this is as simple as with a general purpose language like C#?

I guess I’m struggling to find out why ADF is worth it, when you have to code your own foreach to be able to break out of it.

I’m not saying that SSIS and ADF can’t be the right tools for certain teams and projects, just that there are tradeoffs, and big workarounds for breaking a foreach seem like a good place for pointing some of them out.

Guess we have different views on what’s engineering.

I respect that you would rather focus on the how’s, and not the why’s of GUI ETL tools.

Yes, I’m passionate about learning effective data management, finding out when and how to create maintainable systems, and how to get data warehousing projects successful. Seems like unsolved problems.

Maybe I’ll contact Apress when I’m more experienced, like you. Here is a post written by someone with more experience than I have: [The Rise of the Data Engineer – ETL is changing](https://www.freecodecamp.org/news/the-rise-of-the-data-engineer-91be18f1e603/).